Sell Your Memory Stocks

Custom HBM won't save you, margins are reverting, and trading a cyclical fab at 14.5x book value is pure financial suicide.

On 5 May, I argued that the HBM boom is temporary. The response was huge. Read it here if you haven’t already.

I wanted to write this short article to address the two strongest criticisms of the previous HBM analysis.

First, people asked for hard numbers instead of purely qualitative research. If memory margins are going to reset from 2028 onwards, where is the scenario analysis across DRAM, HBM, and NAND, with clear assumptions on demand and supply?

Second, people said I underplayed custom HBM. If memory is now being tailored for specific clients, does that not reduce commoditisation?

This article answers both questions.

If you read the first piece, you already know the core idea. Memory is not a permanent bottleneck. It can be tight for a period, then loose again when capacity and yields improve. The whole debate is whether AI has permanently broken that cyclicality.

My view is still no. AI can raise demand, but it cannot repeal supply response.

The Bull Case

Before we test my view, we should present the strongest opposite case.

The bull case says memory is no longer a classic commodity. HBM is difficult to make, hard to package, and often co-designed with advanced AI chips. That creates delays, switching costs, and temporary pricing power. If supply takes time to catch up, margins can stay high for longer than old cycle models predict.

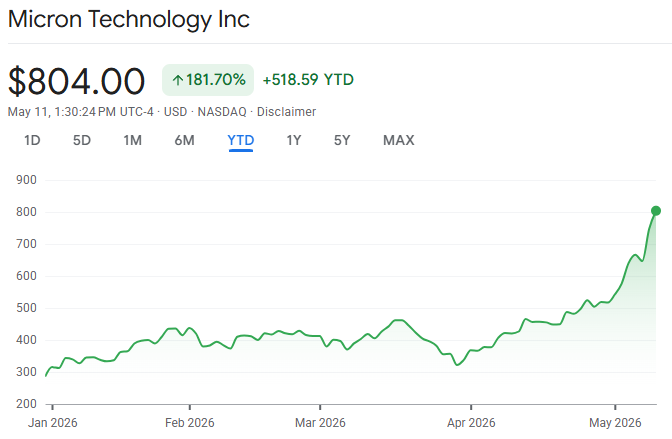

That is all true in the short run. This is why memory stocks have run so hard, and this run may continue for a while.

Where I disagree is what comes next.

The market is pricing today’s tight conditions as if they are permanent. They are not. In memory, permanent is a very dangerous word.

A Quick Explanation

Let me first explain what the terms I will be using in this article actually mean.

DRAM is working memory. Think of it as a desk where active work happens in real time. Your server, laptop, or phone pulls data into DRAM when it needs immediate access at high speed. DRAM is fast, but temporary. When power goes, the data on that desk disappears.

HBM is a premium version of DRAM built for AI workloads. Instead of laying memory chips flat, manufacturers stack them vertically and place them very close to the GPU or AI accelerator. That shorter distance means much faster data transfer and lower power per unit of performance. In simple terms, HBM helps feed the chip quickly enough so expensive compute cores are not left waiting for data.

NAND is storage memory. If DRAM is the desk, NAND is the filing cabinet. It holds data for the long term in SSDs, phones, and data centres. NAND is slower than DRAM for active computation, but it is cheaper per bit and persistent, which makes it great for storing models, datasets, and application data.

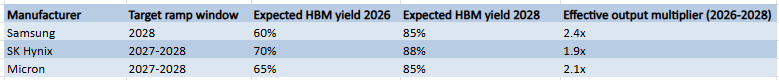

Yield is the percentage of manufactured chips that actually work and can be sold. This is one of the most important numbers in semiconductors. If a line runs at 60% yield, 40 out of every 100 units are effectively lost to defects or performance failures. If that same line improves to 85% yield, sellable output rises sharply without building a new fab. That is why yield improvement can create a hidden supply surge.
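To put a number on that, here is a minimal sketch of the yield arithmetic using the 60% and 85% figures from the example above. The function names and the batch of 100 units are purely illustrative. Under these assumptions, the same line ships roughly 42% more sellable units with no new capacity.

```python
def sellable_units(units_started: int, yield_rate: float) -> float:
    """Units that pass test and can be sold, given a yield between 0 and 1."""
    if not 0.0 <= yield_rate <= 1.0:
        raise ValueError("yield_rate must be between 0 and 1")
    return units_started * yield_rate


def output_uplift(old_yield: float, new_yield: float) -> float:
    """Fractional increase in sellable output from a yield change alone."""
    return new_yield / old_yield - 1.0


units_started = 100  # illustrative batch size, matching the example in the text
before = sellable_units(units_started, 0.60)
after = sellable_units(units_started, 0.85)
uplift = output_uplift(0.60, 0.85)

print(f"{before:.0f} -> {after:.0f} sellable units, uplift {uplift:.1%}")
```

The uplift depends only on the ratio of the two yields, so the batch size does not matter.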

That last point is the most important for investors. Most people track new factory announcements because they are visible and dramatic. Far fewer track yields because they are boring. But both determine real supply, and real supply is what drives pricing power and margins at the end of the day.

Assumptions

I will use a bear, base, and bull case.

In the base case, AI demand remains strong through 2027, then grows at a slower pace as buyers start demanding actual returns on huge hardware spend.

I assume HBM yields improve meaningfully by 2028 because that is what usually happens when world-class engineering teams spend 18-24 months debugging a process.

I also assume announced capacity from Samsung, SK Hynix, and Micron starts contributing meaningfully in the 2027-2029 window.

None of this requires some sort of catastrophic collapse in AI. It only requires memory supply to normalise faster than the market expects.

Demand Scenarios

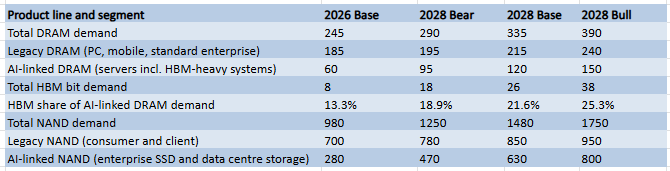

I have split legacy demand from AI demand in the demand table for one simple reason: they do not move together.

Legacy demand still matters more than most people think because it is the broad base of memory consumption. AI is fast-growing, but consumer and everyday enterprise demand is still the foundation. When that foundation is uneven, total demand is less powerful than the share price of Micron would suggest.

Analyst forecasts for 2028 DRAM demand are clustering around the bull case, with most models assuming AI infrastructure spend remains largely unconstrained.

So the question is not whether demand is growing. It is. The real question is whether it is growing fast enough to absorb two things at once: more factories coming online and better manufacturing yields from the factories that already exist.

If demand cannot keep up with both, supply catches up, prices go down, and margins compress. That is the whole point of separating the two.

Supply Scenarios

Now the supply side.

The final column in the table above is the issue for these companies.

If a producer doubles effective output over two years through a mix of new lines and better yields, pricing power becomes harder to sustain unless demand scales at the same speed. Sometimes it does. Usually it does not for long.

This is why cycle turns always feel sudden. They are not random. They are maths.
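A rough sketch of that maths, under my own simplifying assumption that effective output is just installed capacity multiplied by yield. The yield figures reuse the 60% and 85% example from earlier and are illustrative, not producer data. The point is how little new capacity is needed to double output once yields move.

```python
def effective_supply_multiple(capacity_growth: float,
                              old_yield: float,
                              new_yield: float) -> float:
    """Multiple on sellable output from capacity growth and a yield change.

    Assumes output = capacity * yield, which ignores mix shifts and ramp delays.
    """
    return (1.0 + capacity_growth) * (new_yield / old_yield)


def required_capacity_growth(target_multiple: float,
                             old_yield: float,
                             new_yield: float) -> float:
    """Capacity growth needed to hit a target output multiple, given the yield change."""
    return target_multiple / (new_yield / old_yield) - 1.0


# Illustrative inputs: output doubles while yield moves from 60% to 85%.
needed = required_capacity_growth(2.0, 0.60, 0.85)
check = effective_supply_multiple(needed, 0.60, 0.85)

print(f"Capacity growth needed to double output: {needed:.1%}")
print(f"Resulting output multiple: {check:.2f}x")
```

With these inputs, about 41% more capacity is enough to double effective output. The rest comes from yield, which never appears in a factory announcement.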

What About Custom HBM?

Yes, custom HBM exists. It can support better margins on specific programmes because qualification is harder and switching suppliers is not as easy as with standard HBM.

But custom HBM does not automatically make the whole memory sector a permanent moat.

First, custom projects make up a fairly small share of total memory demand. The volume of truly bespoke, client-specific HBM is meaningful but still a fraction of everything Samsung, SK Hynix, and Micron actually ship. The bulk of production is still standard, price-sensitive, and subject to normal market forces.

Second, large buyers hate dependency risk. The hyperscalers are sophisticated enough to know that single-source supply chains are a liability, and they have the scale to do something about it. Over time they push for second sourcing, design flexibility, and industry standardisation, all of which gradually erode the leverage a supplier might have built up through early co-design work.

Third, even if premium custom products hold up well on pricing, sector-wide financial results still depend on blended pricing across all of HBM, regular DRAM, and NAND. A company cannot report earnings from its best programmes alone. If the broader market softens, the full income statement softens with it.

So customisation can delay pressure on certain product lines. It cannot eliminate pressure at the sector level if total sellable supply outruns total bit demand.
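The blended-pricing point can be sketched as a revenue-weighted average. Every share and margin below is a hypothetical placeholder chosen for illustration, not a reported figure, and I hold revenue shares constant for simplicity even though they would shift as prices move.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    revenue_share: float  # fraction of total revenue, all segments sum to 1
    gross_margin: float   # segment gross margin as a fraction


def blended_margin(segments: list[Segment]) -> float:
    """Revenue-weighted gross margin across all segments."""
    total_share = sum(s.revenue_share for s in segments)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("revenue shares must sum to 1")
    return sum(s.revenue_share * s.gross_margin for s in segments)


# Hypothetical tight market: every segment earns elevated margins.
tight = [
    Segment("custom HBM", 0.10, 0.65),
    Segment("standard HBM", 0.20, 0.60),
    Segment("DRAM", 0.45, 0.50),
    Segment("NAND", 0.25, 0.35),
]

# Hypothetical loose market: custom HBM holds its margin, everything else resets.
loose = [
    Segment("custom HBM", 0.10, 0.65),
    Segment("standard HBM", 0.20, 0.45),
    Segment("DRAM", 0.45, 0.30),
    Segment("NAND", 0.25, 0.20),
]

tight_margin = blended_margin(tight)
loose_margin = blended_margin(loose)
print(f"Blended margin: {tight_margin:.1%} -> {loose_margin:.1%}")
```

In this toy setup the custom segment does not lose a single point of margin, yet the blended figure still falls from about 50% to 34%.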

Base Case Outcomes

Here is the base case for prices, margins, and valuation multiples.

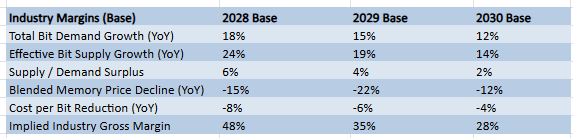

As you can see, even in a base case where AI demand grows a very healthy 12-18% YoY, effective supply grows faster due to new capacity and yield improvements. When supply outpaces demand, blended pricing compresses faster than cost-per-bit reductions can offset. This drags industry gross margins from their current peak back toward historical averages.
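To show how quickly a gap like this compounds, here is a small sketch. The 15% demand growth is the midpoint of the 12-18% range above. The 25% supply growth is a placeholder I have picked for illustration, not a figure from the table.

```python
def supply_demand_ratio(demand_growth: float,
                        supply_growth: float,
                        years: int,
                        start_ratio: float = 1.0) -> list[float]:
    """Supply-to-demand ratio at the end of each year, compounding both growth rates.

    start_ratio below 1 represents a market that begins in shortage.
    """
    ratios = []
    ratio = start_ratio
    for _ in range(years):
        ratio *= (1.0 + supply_growth) / (1.0 + demand_growth)
        ratios.append(ratio)
    return ratios


# Illustrative inputs: 15% demand growth, 25% supply growth (assumed), three years.
path = supply_demand_ratio(demand_growth=0.15, supply_growth=0.25, years=3)

for year, ratio in enumerate(path, start=1):
    print(f"Year {year}: supply is {ratio:.2f}x demand")
```

With these inputs, a balanced market ends the third year with supply roughly 28% above demand, even though demand never stopped growing.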

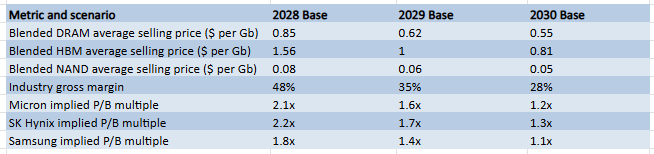

This table then shows what happens financially when the supply response described above meets moderating demand. Blended DRAM prices decline meaningfully from 2028 onwards as scarcity evaporates. HBM pricing, which currently sits at a significant premium, compresses as more supply enters and custom programmes gradually become a smaller share of total volume.

Gross margins across the industry revert from their current elevated levels back toward the long-run average, which history tells us sits in the high twenties to low thirties.

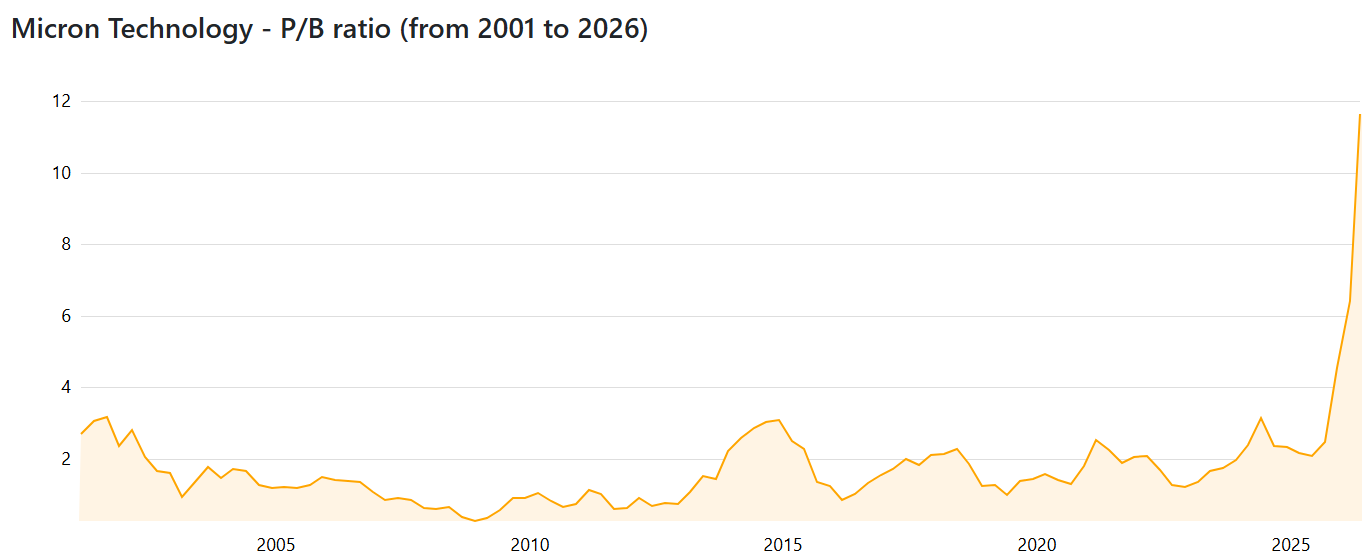

Memory companies are cyclical businesses. The best way to value them is on P/B multiples, because earnings are too volatile to anchor a DCF model at any stage of the cycle.

At the absolute bottom of previous cycles, these stocks have traded at 1.0-1.5x book value. At the euphoric peak, they have reached 2.5-3.0x.

Today, several of these companies are trading far above historical peak multiples, implying that current margins are permanent rather than cyclical.

The risk for investors is simple: you can get hit twice.

First by earnings pressure as prices normalise. Revenue may stay high, but margins compress and profits fall. Second by multiple compression as the market stops treating peak margins as permanent and reprices the stock as the cyclical business it actually is.

Both happen at the same time, because it is usually the same realisation that triggers both.
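The two hits multiply rather than add. A minimal sketch, assuming price is simply a fundamental (earnings or book value) times a multiple. The 40% and 35% declines are hypothetical inputs. The 14.5x and 3.0x multiples are the ones quoted in this article, and the second calculation assumes book value stays flat.

```python
def combined_drawdown(fundamental_decline: float, multiple_decline: float) -> float:
    """Price decline when the fundamental and the multiple both fall.

    Assumes price = fundamental * multiple, so the two declines compound.
    """
    return 1.0 - (1.0 - fundamental_decline) * (1.0 - multiple_decline)


def rerating_drawdown(current_multiple: float, target_multiple: float) -> float:
    """Price decline from multiple compression alone, with the fundamental held flat."""
    return 1.0 - target_multiple / current_multiple


# Hypothetical: earnings fall 40% and the multiple falls 35% at the same time.
double_hit = combined_drawdown(0.40, 0.35)

# Multiples from the article: 14.5x book today versus a 3.0x historical peak.
rerating = rerating_drawdown(14.5, 3.0)

print(f"Double hit: {double_hit:.0%} price decline")
print(f"Re-rating from 14.5x to 3.0x book: {rerating:.0%} price decline")
```

Neither input in the first case looks extreme on its own, but together they produce a drawdown of about 61%.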

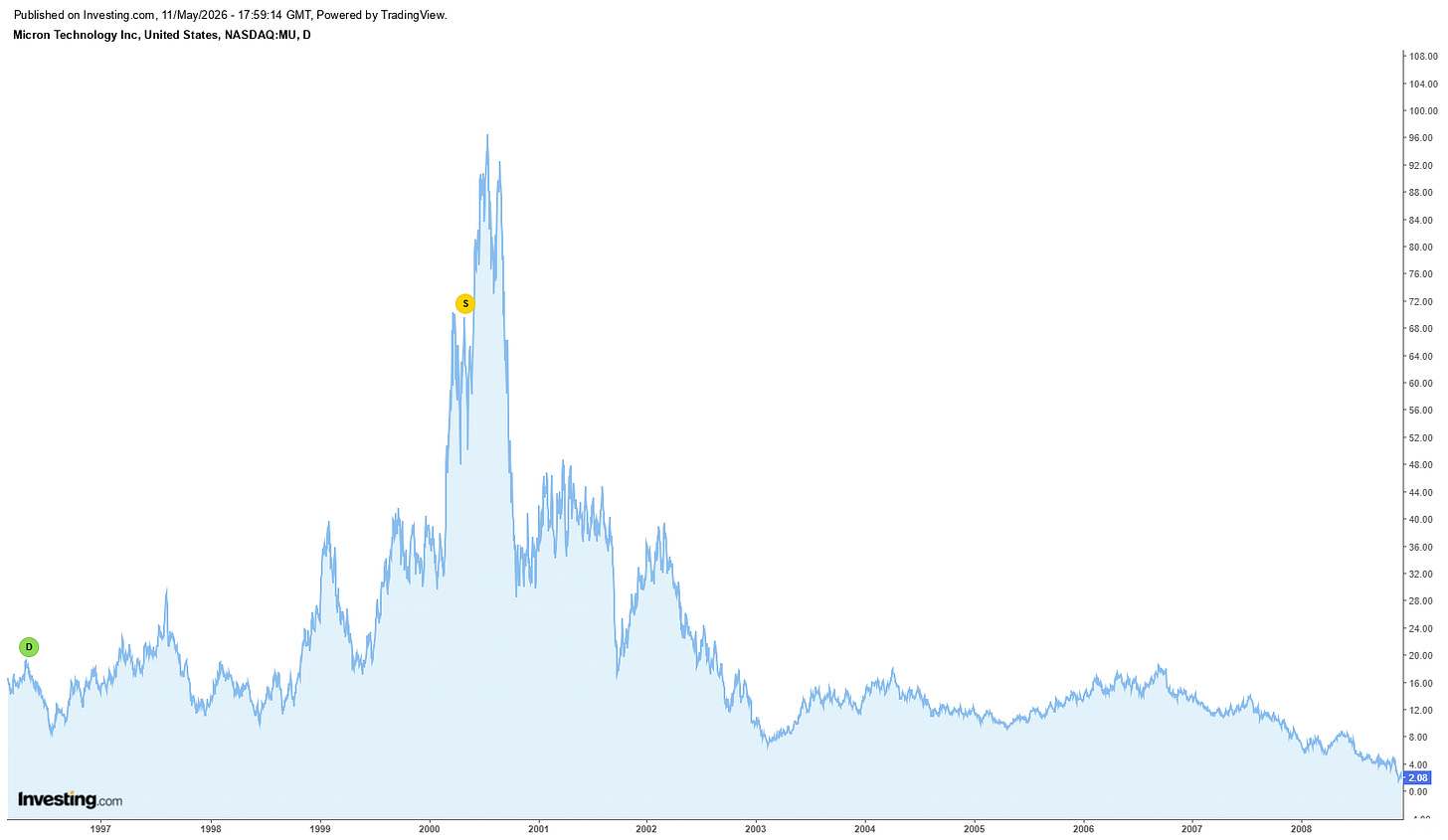

That double hit is not unusual. It is the standard reason memory stocks drop 60% from their peaks in every major cycle. The narrative sounds different each time, but the underlying maths is the same.

You do not need to be a semiconductor engineer to understand this. Think of it like shipping rates, steel, or oil services in past cycles. Tight market, high prices, aggressive expansion, then normalisation when supply catches up.

AI changes the size of the wave. It does not remove the tide.

What Would Prove Me Wrong

I am wrong if advanced packaging and yield improvements stall for far longer than expected, keeping supply tighter into 2028 and beyond.

I am wrong if AI demand proves so deep and so durable that it absorbs each new wave of capacity without meaningful price pressure. That would require not just strong training demand, but sustained inference growth, rising memory content per deployed workload, and continued hyperscaler capex intensity well into the next decade.

I am wrong if Samsung, SK Hynix, and Micron all demonstrate unusual capital discipline at the top of the cycle, prioritising returns over market share and resisting the normal incentive to overbuild when margins are strongest. This would be a break from historical behaviour.

Those are the checkpoints to watch. If supply stays tight despite capex, if demand keeps outrunning new output, and if producer discipline holds at peak profitability, then the bear case weakens.

Thank you for reading, let me know what you think, and have a great day!

What are your thoughts on the fact that HBM takes at least 3x the clean room space of DRAM, and therefore that HBM capacity growth will cannibalise DRAM capacity?

There is a “risk” that each generation of GPUs (usually a 2-year cycle) sucks up 1.5x or more memory for the same compute GW. That's your main risk scenario vs your thesis, in my view.